Individual DYS19 DXYS156Y DYS389m DYS389n DYS389p

1 H1 14 12 4 12 3

2 H3 15 13 4 13 3

3 H4 15 11 5 11 3

4 H5 17 13 4 11 3

5 H7 13 12 5 12 3

6 H8 16 11 5 12 3Bayesian inference

Advanced Statistics and Data Analysis

Introduction

The Frequentist Phylosophy

The statistical methods discussed so far are known as frequentist.

The frequentist point of view is based on the following ideas:

Probabilities are objective properties of the real world: they are defined as the limit of the relative frequencies in infinite many trials.

Parameters are fixed, unknown constants.

As a consequence, a frequentist methods are designed to have good long-run frequency properties.

Example

A \(95\%\) confidence interval is defined so that it includes the true value of the parameter \(95\%\) of the times if we repeat the experiment an infinite number of times.

Note that it is not the parameter but the interval to be random!

The Bayesian Phylosophy

The Bayesian approach takes a very different point of view:

Probabilities describe a degree of belief: they are not objective properties of the world, but rather subjective propositions.

Parameters are random variables: there is no such thing as the true parameter, all we know is the probability distribution of its values.

As a consequence, inference in a Bayesian setting takes the form of an “updated” distribution of the parameter: we can then extract point estimates and intervals from this distribution.

Model specification

In frequentist inference, we need a model for the data generating distribution (i.e., the likelihood).

In the Bayesian framework, in addition to the likelihood, we need to specify a prior distribution for the parameter(s).

This prior distribution elicit our prior belief in what the value of the parameter should be.

Inference is carried out by updating the prior distribution with the new knowledge that we observed in the data, to form the posterior distribution.

Bayes’ Theorem

Probability

A fair coin is tossed twice. What is the probability of observing exactly one Head?

The sample space is \(S=\{HH,HT,TH,TT\}.\) Because the coin is fair, each of the four outcomes in \(S\) is equally likely.

Let \(A\) denote the event that exactly one Head is observed. Then, \(A = \{HT, TH\},\) and \[P(A) = \frac{\#(A)}{\#(S)} = \frac{2}{4} = 0.5.\]

Conditional Probability

A fair coin is tossed twice. Suppose that we toss the coins once and we observe Tail. What is now the probability of observing exactly one Head?

We denote with \(B\) the event that we observed (that the first toss is Tail), and with \(A\) the event that exactly one Head is observed.

As before, \(A = \{HT, TH\}\), but we have \(B = \{TH, TT\},\) so the only event in \(A\) that is compatible with \(B\) is \(\{TH\}\).

We hence computed the conditional probability of the event \[ P(A | B) = \frac{\#(A \cap B)}{\#(S \cap B)} = \frac{1}{2} = 0.5. \]

What would be the conditional probability if the first coin was Head?

Conditional Probability

If \(A\) and \(B\) are events, and \(P(B) > 0\), the conditional probability of \(A\) given \(B\) is \[P(A|B) = \frac{P(A\cap B)}{P(B)} .\]

The same equality can be written as: \[P(A|B) P(B)= P(A\cap B).\]

Example: COVID-19 test

Thanks to Laura Ventura

Suppose that we perform a test to check whether we have contracted COVID-19. The possible observable outcomes are two: we have contracted the virus \((D),\) or we have not \((H)\).

From the latest statistical analyses, we can estimate the prevalence of the disease in the population, \(P(D)\), i.e., the probability that an individual selected at random from the population is infected.

Diagnostic Test

A test is designed to detect the presence of the SARS-CoV-2 virus in the nose and throat. This test also has two possible outcomes: positive for the presence of viral RNA \((+),\) negative for the presence of viral RNA \((-)\).

Because diagnostic procedures undergo extensive evaluation before they are approved for general use, we have a fairly precise notion of the probabilities of a false positive, i.e., the probability of obtaining a positive test result given that the patient is not infected, and a false negative, i.e., the probability of obtaining a negative test results given that the patient is infected.

These probabilities are conditional probabilities: the probability of a false positive is \(P(+|H),\) and the probability of a false negative is \(P(-|D)\).

Predictive Value of the Test

The gold standard test for COVID-19 has a false positive and false negative rate of about \(5\%\).

Assume that there is a COVID-19 prevalence of about \(8\%\) in the Italian population.

Assume \(P(D) = .08,\) \(P(+|H) = .05\) and \(P(-|D) = .05\).

(This implies \(P(H) = .92,\) \(P(-|H) = .95\) and \(P(+|D) = .95\).)

What is the predictive value of the test, i.e., the probability that we don’t have COVID-19 if the test is negative?

The question asks to compute the quantity \(P(H|-).\) By definition, we have \[P(H|-) = \frac{P(H\cap -)}{P(-)} = \frac{P(-|H) P(H)}{P(-)}.\]

Computations

We know that \[P(H \cap -) = P(H) P(-|H) = 0.92 \times 0.95,\]

\[P(D \cap -) = P(D) P(-|D) = 0.08 \times 0.05,\]

\[P(H \cap +) = P(H) P(+|H) = 0.92 \times 0.05,\]

\[P(H \cap -) = P(H) P(-|H) = 0.92 \times 0.95,\]

\[P(-) = P(D \cap -) + P(H \cap -)=0.08 \times 0.05 + 0.92 \times 0.95.\]

Therefore, \[P(H|-) = \frac{P(H\cap -)}{P(-)} = \frac{0.92 \times 0.95}{0.08 \times 0.05 + 0.92 \times 0.95}=0.995.\]

Bayes’ Theorem

Note that we have just used one of the most important theorems in modern statistics, Bayes’ Theorem.

The theorem states: \[ P(B | A) = \frac{P(A | B) P(B)}{P(A)}. \]

In words, if we know the conditional probability of \(A\) given \(B\), in addition to the marginal probabilities of the two events, we have a rule to compute the conditional probability of \(B\) given \(A\).

Example: births in Paris

Laplace was the first to formulate a statistical problem in Bayesian terms.

The question he poses was whether the probability of a female birth is or is not lower than 0.5.

Data: in Paris, from 1745 to 1770 there were 493,472 births, of which 241,945 were girls.

A frequentist solution

We can assume a binomial model and get a frequentist estimate for the probability of a female birth as \(\hat\theta = \frac{241,945}{493,472} = 0.4903\)

We can also compute a confidence interval \([0.4889, 0.4917]\)

Finally, we can test the null hypothesis \(H_0: \theta = 0.5\) and reject it as the obtained p-value is close to 0.

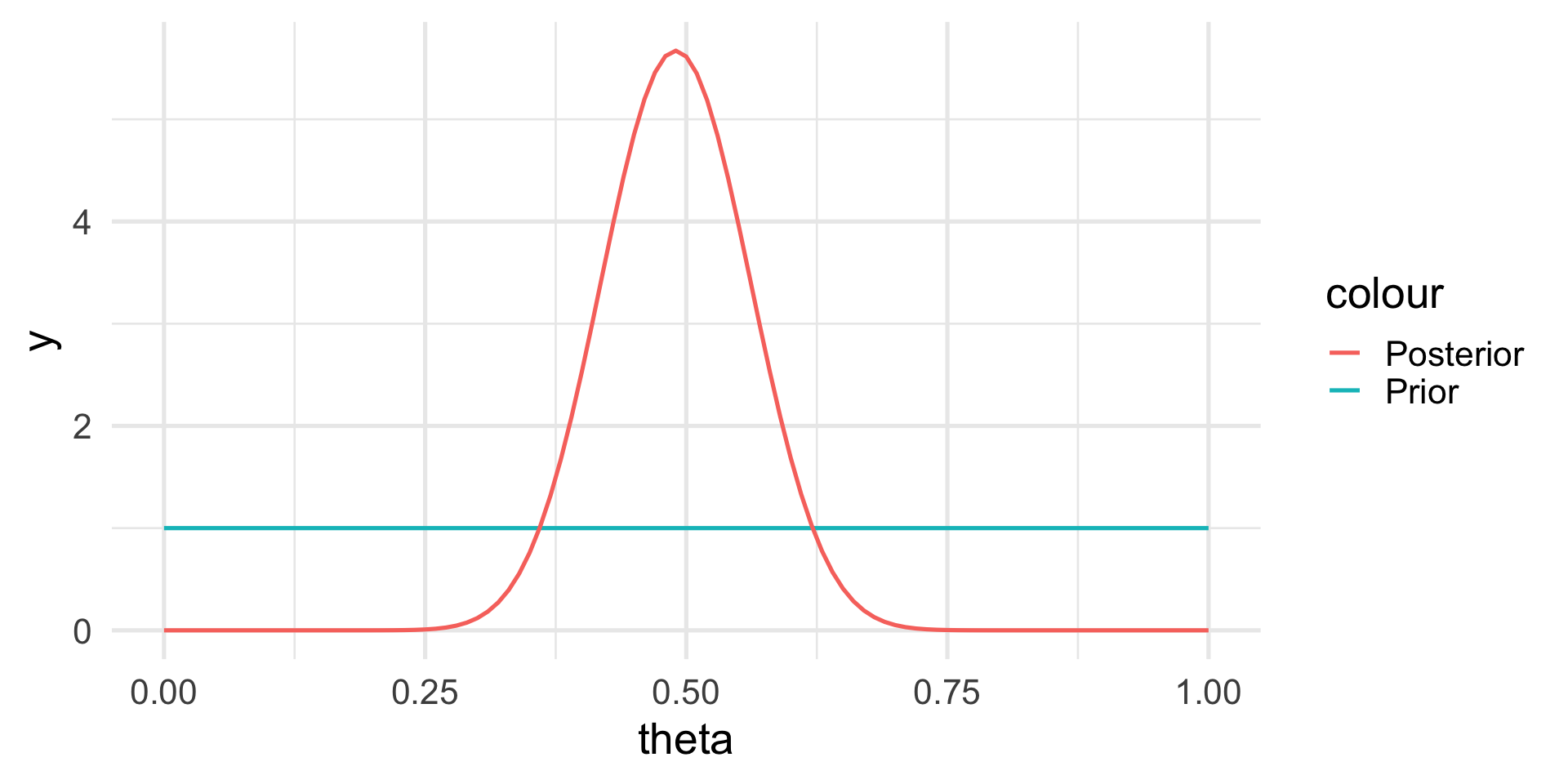

A Bayesian solution

Laplace argues that he has no reason to a priori believe that any value of \(\theta\) is more likely than others.

Hence, the prior probability is \(p(\theta) \sim \mathcal{U}(0, 1)\).

We can prove that in such case the posterior distribution is

\[ p(\theta | \text{data}) \propto \theta^{241,945} \, (1-\theta)^{493,472 - 241,945} \]

The posterior distribution

Bayes’ Theorem revisited

In statistics, we can rethink the Bayes’ Theorem in terms of the data and the parameter: \[ p(\theta | y) = \frac{p(\theta) \, p(y | \theta)}{p(y)}, \] where:

- \(p(\theta)\) is the prior distribution of the parameter

- \(p(y | \theta)\) is the distribution of the data given a particular value of the parameter (i.e., the likelihood)

- \(p(y)\) is the marginal distribution of the data (across all possible values of the parameter)

Note

Much of Bayesian statistics deals with ways to compute/approximate \(p(y)\).

Example: Haplotype frequencies

A haplotype is a collection of alleles (DNA sequence variants) that are spatially adjacent on a chromosome, are usually inherited together (recombination tends not to disconnect them), and thus are genetically linked. In this case we are looking at linked variants on the Y chromosome.

We are interested in the frequencies of particular Y-haplotypes that consist of a set of different short tandem repeats (STR). The combination of STR numbers at the specific locations used for DNA forensics are labeled by the number of repeats at the specific positions.

Example: Haplotype frequencies

The data look like this:

For instance, haplotype H1 has 14 repeats at position DYS19, 12 repeats at position DXYS156Y, etc.

Suppose we want to find the underlying proportion \(\theta\) of a particular haplotype in a population of interest, by haplotyping \(n=300\) men; and suppose we found H1 in \(y = 40\) of them.

Frequentist approach

We can assume that the data generating distribution is \(Y \sim \mathcal{Bin}(n, \theta)\), with \(\theta\) fixed and unknown.

By now we know that the maximum likelihood estimate of \(\theta\) is \(\hat{\theta} = 40/300 = 0.13\).

We can compute confidence intervals and test hypotheses on the true value \(\theta\).

Bayesian approach

In a Bayesian setting, \(\theta\) is not fixed, and we need to assume a prior probability distribution for the parameter as well.

In principle we could use any distribution.

It is convenient to specify a Beta distribution, i.e.

\[ p_{\alpha, \beta}(x) = \frac{x^{\alpha-1} (1-x)^{\beta-1}}{B(\alpha, \beta)}, \] where \(B(\alpha, \beta) = \frac{\Gamma(\alpha) \Gamma(\beta)}{\Gamma(\alpha+\beta)}\) is called the beta function.

Prior distribution

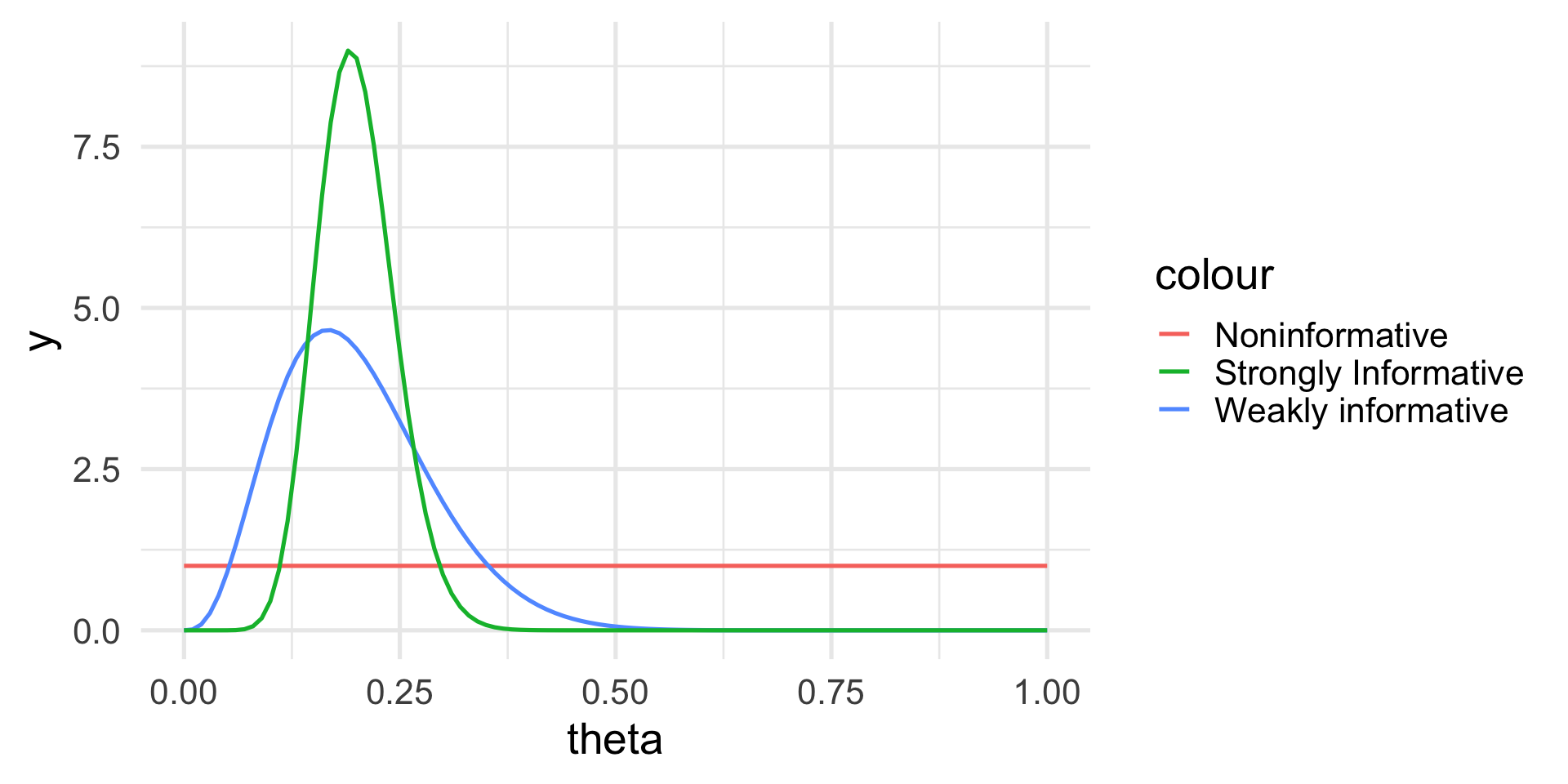

We can see how the prior distribution depends on two parameters, in this case \(\alpha\) and \(\beta\).

These are known as hyper-parameters and are typically chosen according to some prior information.

The choice of a Beta distribution in this case is mathematically convenient, as one can show that the posterior distribution will be again a Beta distribution.

We say that the Beta distribution is the conjugate prior for the binomial model.

How to choose the hyperparameters

The hyperparameters are our way to express our prior knowledge of the problem.

An intuitive way is to think about the prior mean and variance as a way to elicit what is the value that we expect before performing the data (mean) and how confident we are in our prediction (variance).

The Beta distribution is quite flexible in that it includes the uniform distribution (absence of prior information) as well as very concentrated distributions.

How to choose the hyperparameters

If \(X \sim \mathcal{Beta}(\alpha, \beta)\), then \[ E[X] = \frac{\alpha}{\alpha + \beta} \] and

\[ Var(X) = \frac{\alpha\beta}{(\alpha + \beta)^2(\alpha+\beta+1)} \]

Example: Haplotype frequencies

Let’s think about three situations:

We have no prior knowledge on the haplotype frequencies in the population: we can choose a uniform distribution.

We know from the literature that one in five men should have haplotype H1, but we are not very confident.

We are very confident that one in five men has haplotype H1.

Example: Prior distribution

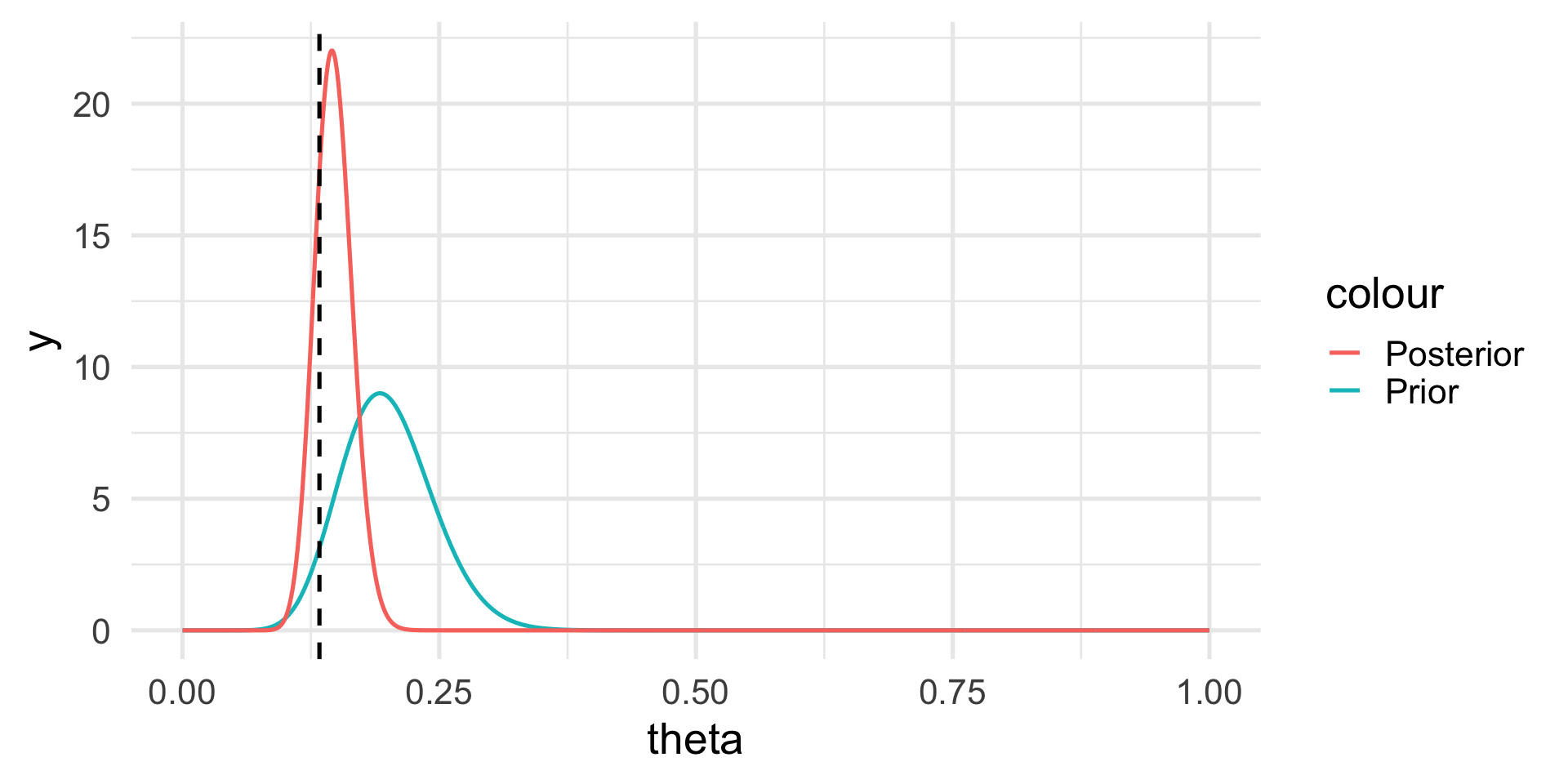

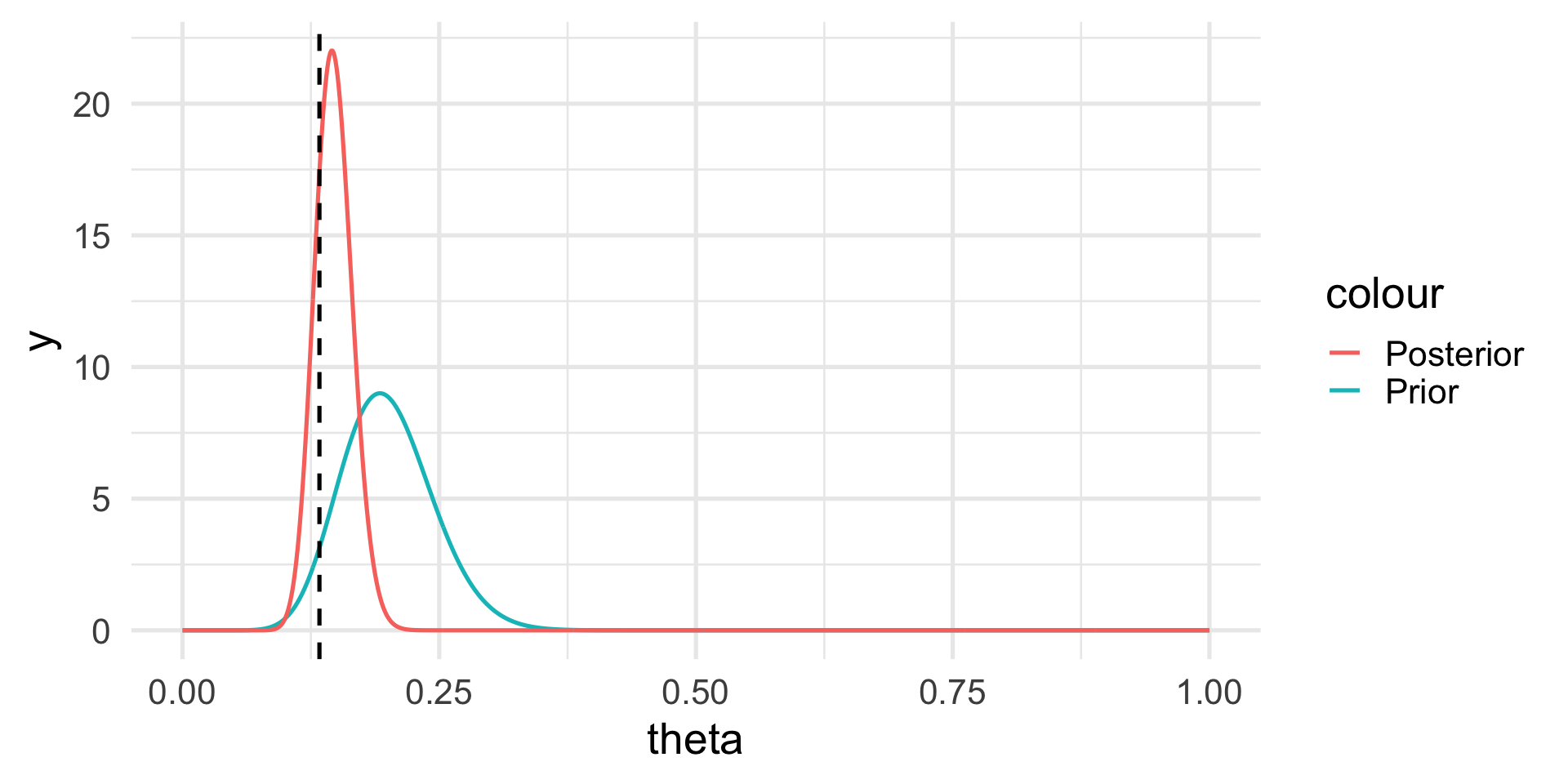

The posterior distribution

If the prior is Beta and the likelihood is Binomial, the posterior distribution is still Beta, but with updated parameters: \[ p(\theta | y) \sim \mathcal{Beta}(\alpha + y, \beta + n - y). \]

Hence, \[ E[\theta | y] = \frac{\alpha+y}{\alpha + \beta + n}, \]

which is a synthesis of the prior information and the information contained in the data.

The posterior distribution

The posterior distribution

Note that we do not have a single answer for the “best” value of \(\theta\) but a distribution.

However, we can calculate the posterior mean and probabilities based on the posterior distribution, e.g., \(Pr(\theta > 0.2) \approx 0.003\).

The posterior distribution

Let’s look again at the posterior distribution: \[ \theta | y \sim \mathcal{Beta}(\alpha + y, \beta + n - y). \]

As we increase the sample size, we give more weight to the data.

Conversely, if we increase the hyperparameters we give more weight to our prior belief.

Credibility intervals

The Bayesian analog of the confidence intervals are the credibility intervals.

These are much more natural to think about: they are straightforward to interpret in terms of the posterior probability of the parameter.

For instance, we can use quantiles to compute the range in which the parameter lies with \(95\%\) probability.

\[ Pr(q_{2.5} \leq \theta \leq q_{97.5}) = 0.95 \]

Example: Haplotype frequencies

Putting it all together, we can specify our Bayesian model as:

The data generating distribution is \(y|\theta \sim \mathcal{Bin}(n=300, \theta);\)

The prior distribution is \(\theta \sim \mathcal{Beta}(\alpha = 16, \beta = 64);\)

Observing \(y=40\) leads to the posterior distribution \[ \theta | y \sim \mathcal{Beta}(16+40, 64 + 300 - 40). \]

Example: Haplotype frequencies

The posterior mean is

\[ E[\theta | y] = \frac{\alpha+y}{\alpha + \beta + n} = 0.147 \]

(compared to a MLE of \(\hat\theta = 0.13\)).

Not just Beta

Many of the concepts that we have seen in this example extend to many other distributions!

For instance if we have Gaussian data, a Gaussian prior on the mean will lead to a Gaussian posterior.

This is true for the linear model too!

Not just conjugate priors

There are many different choices of prior distributions, and when closed form solutions do not exist to compute the posterior, we need to use numerical approximations or simulation approaches.

Markov Chain Monte Carlo (MCMC) and Variational Approximations are perhaps the two most popular techniques.